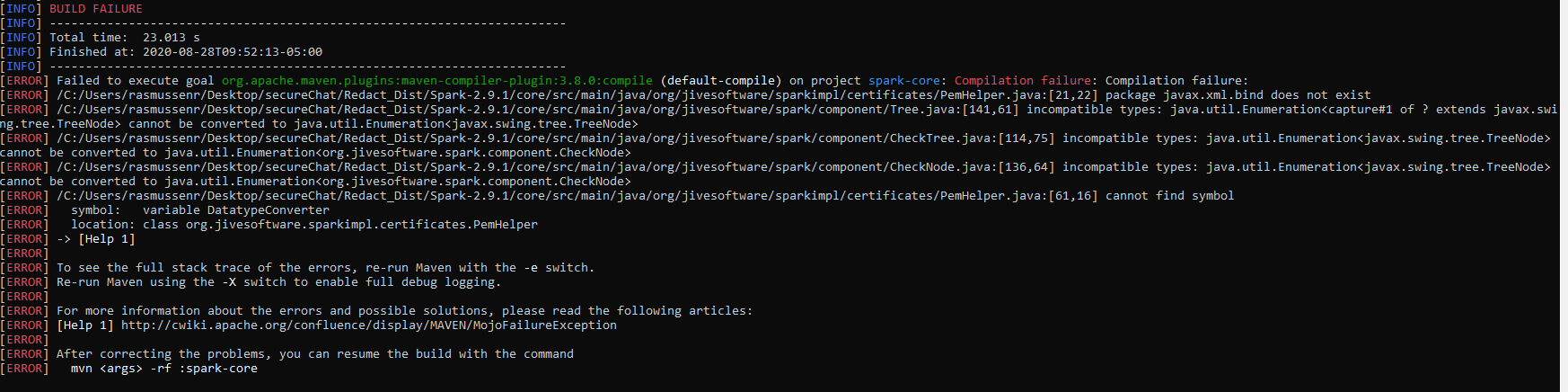

When compiling Spark 2.9.1 with OpenJDK 11.0.1, I run into an "incompatible types" error, shown below. I was able to get it to compile by making the following source code changes:

`tree.java`, line 147:

`for (Enumeration e = node.children(); e.hasMoreElements(); ) {`

changed to

`for (Enumeration e = (Enumeration)(Object) node.children();; ) {`
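For comparison, here is a minimal sketch of the same kind of loop written against the Java 11 signatures. It assumes the error comes from the generified Swing tree API: on Java 11, `DefaultMutableTreeNode.children()` is declared to return `Enumeration<TreeNode>`, where Java 8 declared a raw `Enumeration`. Instead of double-casting the whole `Enumeration`, it keeps the `e.hasMoreElements()` condition from the original loop and narrows each element with `instanceof`. The class `ChildrenLoop` and method `childUserObjects` are hypothetical; Spark's actual node class and loop body are not shown here.

```java
import java.util.ArrayList;
import java.util.Enumeration;
import java.util.List;
import javax.swing.tree.DefaultMutableTreeNode;
import javax.swing.tree.TreeNode;

public class ChildrenLoop {
    // Hypothetical helper: collects the user objects of a node's direct children.
    static List<Object> childUserObjects(DefaultMutableTreeNode node) {
        List<Object> result = new ArrayList<>();
        // On Java 11, children() is declared as Enumeration<TreeNode>, so no cast is needed here.
        for (Enumeration<TreeNode> e = node.children(); e.hasMoreElements(); ) {
            TreeNode child = e.nextElement();
            // Narrow each element individually instead of casting the whole Enumeration.
            if (child instanceof DefaultMutableTreeNode) {
                result.add(((DefaultMutableTreeNode) child).getUserObject());
            }
        }
        return result;
    }

    public static void main(String[] args) {
        DefaultMutableTreeNode root = new DefaultMutableTreeNode("root");
        root.add(new DefaultMutableTreeNode("a"));
        root.add(new DefaultMutableTreeNode("b"));
        System.out.println(childUserObjects(root)); // prints [a, b]
    }
}
```

A leaf node needs no special handling: `children()` returns an empty enumeration when a node has no children.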

`checktree.java`, line 114:

`//Enumeration nodeEnum = root.breadthFirstEnumeration();`

changed to

`Enumeration nodeEnum = (Enumeration)(Object) root.depthFirstEnumeration();`

`Checknode.java`, line 136:

`//Enumeration nodeEnum = children.elements();`

changed to

`Enumeration nodeEnum = (Enumeration)(Object) children.elements();`
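A similar sketch for the other two call sites, assuming (not confirmed from the Spark sources here) a node class that extends `DefaultMutableTreeNode`. On Java 11, `breadthFirstEnumeration()` returns `Enumeration<TreeNode>` and the inherited protected `children` field is a `Vector<TreeNode>`, so both can be consumed as typed enumerations with per-element checks, and the breadth-first order of the original line is kept. `TypedEnumerations`, `DemoNode`, `labelsBreadthFirst` and `directChildCount` are made-up names for illustration.

```java
import java.util.ArrayList;
import java.util.Enumeration;
import java.util.List;
import javax.swing.tree.DefaultMutableTreeNode;
import javax.swing.tree.TreeNode;

public class TypedEnumerations {
    // Hypothetical subclass standing in for a custom tree node class.
    static class DemoNode extends DefaultMutableTreeNode {
        DemoNode(String label) {
            super(label);
        }

        // Counts direct children through the inherited protected field.
        // On Java 11 the field is Vector<TreeNode>, so elements() yields Enumeration<TreeNode>.
        int directChildCount() {
            if (children == null) {
                return 0; // the field stays null until the first child is added
            }
            int count = 0;
            Enumeration<TreeNode> nodeEnum = children.elements();
            while (nodeEnum.hasMoreElements()) {
                nodeEnum.nextElement();
                count++;
            }
            return count;
        }
    }

    // Collects labels in breadth-first order, matching the original breadthFirstEnumeration() call.
    static List<String> labelsBreadthFirst(DefaultMutableTreeNode root) {
        List<String> labels = new ArrayList<>();
        Enumeration<TreeNode> nodeEnum = root.breadthFirstEnumeration();
        while (nodeEnum.hasMoreElements()) {
            TreeNode node = nodeEnum.nextElement();
            if (node instanceof DemoNode) {
                labels.add(String.valueOf(((DemoNode) node).getUserObject()));
            }
        }
        return labels;
    }

    public static void main(String[] args) {
        DemoNode root = new DemoNode("root");
        DemoNode a = new DemoNode("a");
        root.add(a);
        root.add(new DemoNode("b"));
        a.add(new DemoNode("a1"));
        System.out.println(labelsBreadthFirst(root)); // prints [root, a, b, a1]
        System.out.println(root.directChildCount());  // prints 2
    }
}
```

Swapping to `depthFirstEnumeration()` would visit `a1` before `b`, so the two calls are not interchangeable when the traversal order matters.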

This allows it to compile, but the conference browser tab breaks when I try to use it.

Has anyone encountered this problem, and if so, is there a solution?

Below is my error log for reference.