What does Spark need installed on a PC for the spell check to work? I have some PCs where the spell check is available and some where it is not. Thanks!

Hi pf2k,

There doesn’t have to be anything installed on the PC (other than Spark, of course) for the spell check to work. If it’s not working, there are two things to check:

- In Spark, go to the Spark menu, select Preferences, and on the General Chat Settings page make sure the “Perform spell checking in background” option is selected.

- The spell checker is actually a plugin, so navigate to where Spark is installed (most likely C:\Program Files\Spark), go into the plugins directory, and check that spelling-plugin.jar is present. If it’s not, you can try copying the file from another machine that has it and then restarting Spark.
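
For checking more than a handful of machines, the second item can be scripted. What follows is only a minimal sketch in Python: the install path is the "most likely" default mentioned above, the jar name is the one given above, and the function name `spelling_plugin_present` is made up for illustration.

```python
from pathlib import Path

# Assumption: the default install location mentioned above; adjust if Spark
# is installed somewhere else on your machines.
DEFAULT_PLUGINS_DIR = Path(r"C:\Program Files\Spark\plugins")

# File name as given in the post above.
SPELLING_JAR = "spelling-plugin.jar"


def spelling_plugin_present(plugins_dir: Path = DEFAULT_PLUGINS_DIR) -> bool:
    """Return True if the spelling plugin jar exists in plugins_dir."""
    return (plugins_dir / SPELLING_JAR).is_file()


if __name__ == "__main__":
    state = "found in" if spelling_plugin_present() else "missing from"
    print(f"{SPELLING_JAR} {state} {DEFAULT_PLUGINS_DIR}")
```

Passing a different `plugins_dir` lets the same check run against a copy of the folder or a network path.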

Hope that helps,

Ryan

Okay, I didn’t think it depended on any external components… I can’t figure out why the spell check is not working on so many machines. The button to do a spell check manually is not even there… I’ll try your suggestions. Thanks again.

Quick update… the machines have three plugins listed, the same as my machines on which spell checking does work. But I noticed my PC also has three folders in the plugins folder, named the same as the plugins. The machines on which spell checking does not work do not have those three folders…

Well, I just exported a list of all the computers, and using the excellent psexec and a batch file I am in the process of copying the necessary folders over to the computers that need them. I have no idea why it’s not on some of them, but still, thanks for the answer!

The directories are actually expanded versions of the .jar files, which Spark automatically creates when it starts up. If I had to take a guess, I’d say there might be a permissions error that is preventing Spark from creating those directories. Are there any errors in the log directory?

Thanks,

Ryan
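
One rough way to test the permissions guess is to run something like the following as the affected user. It is a sketch only: it tries to create, and immediately remove, a scratch directory in the plugins folder, which approximates what Spark needs to do when it expands a .jar. The function name `can_create_subdirectory` and the default path in the example are assumptions, not anything Spark provides.

```python
import tempfile
from pathlib import Path


def can_create_subdirectory(parent: Path) -> bool:
    """Try to create (and immediately clean up) a scratch directory under parent.

    Returns False if the directory cannot be created for any OS-level reason,
    including missing permissions or a parent that does not exist.
    """
    try:
        with tempfile.TemporaryDirectory(dir=parent):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    # Assumption: default install location; change to match your machines.
    plugins_dir = Path(r"C:\Program Files\Spark\plugins")
    print(f"Can create folders in {plugins_dir}: {can_create_subdirectory(plugins_dir)}")
```

A result of `False` for a limited user, and `True` for an administrator on the same machine, would point at folder permissions.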

It actually was that. The users were limited users on XP Pro workstations and did not have permissions to create the folders. Thanks for pointing me in the right direction earlier.

You’re welcome. Good to hear you got everything straightened out.

I have non-admin/plain user level users in most of my environment & their plugins such as spellcheck do not work. They are also not able to add additional plugins such as the translator. Yes, I know they do not have rights to C:\Program Files\Spark\plugins; I also do not want to manually copy the directories to every single machine, including remote laptops. This will only become a bigger problem as time goes on & more companies run their local users as non-administrators. What is the fix for this?

Thank you

I created a batch file that took a list of computers as input and used xcmd. You could also use psexec, either to set permissions on the folder so that the plugins expand the next time the user starts Spark, or to copy over the needed folders.
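
The "copy over the needed folders" option could look roughly like this in Python, as an alternative to a batch file. This is a sketch under loud assumptions: it is not the batch file described above, it assumes each computer's `C$` administrative share is reachable with your credentials, it assumes the default install path, and the names `remote_plugins_dir`, `copy_expanded_plugins`, and `computers.txt` are hypothetical.

```python
import shutil
from pathlib import Path, PureWindowsPath
from typing import List


def remote_plugins_dir(computer: str) -> PureWindowsPath:
    """Build the UNC path of the plugins folder on a remote computer.

    Assumption: the C$ admin share is enabled and Spark is installed in the
    default location.
    """
    return PureWindowsPath(rf"\\{computer}\C$\Program Files\Spark\plugins")


def copy_expanded_plugins(source_plugins: Path, dest_plugins: Path) -> List[str]:
    """Copy every subdirectory (expanded plugin) of source_plugins to dest_plugins.

    Plain files such as the .jar files themselves are left alone.
    Returns the names of the folders that were copied.
    """
    copied = []
    for entry in sorted(source_plugins.iterdir()):
        if entry.is_dir():
            # dirs_exist_ok requires Python 3.8 or later.
            shutil.copytree(entry, dest_plugins / entry.name, dirs_exist_ok=True)
            copied.append(entry.name)
    return copied


if __name__ == "__main__":
    # Hypothetical input: one computer name per line.
    source = Path(r"C:\Program Files\Spark\plugins")
    for name in Path("computers.txt").read_text().split():
        print(name, copy_expanded_plugins(source, Path(str(remote_plugins_dir(name)))))
```

Run it from a machine where the plugin folders have already been expanded, using an account that can write to the remote folders.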

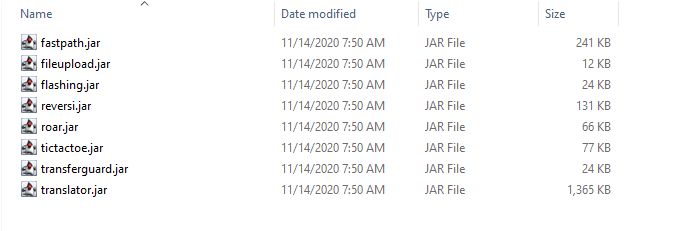

Before I start a new thread: I’m having the same problem, but different. I also have a number of machines (100+) where the spellchecker is fine, but I have a good number where it is just missing under File->Preferences … just not there. My Spark\plugins folder for the working units is exactly the same as on those not working … a screen grab of said folder is attached

shoot … thought this was Dec 6th THIS year … not 15 years ago … guess this needs a new thread

Nope, we’re now committed to reviving a 15-year-old thread

Are all your users using the same version of Spark? Are they all using the same version of Java? Spellchecking is a plugin-provided feature. That plugin requires Spark 2.7.0 (or later) and Java 1.7 (or later).

Thanks to @guus, this plugin now works.

You can download the latest nightly build and try using it.

Everyone is running 2.9.4 with JRE … looking at two machines where SpellCheck works on one but not another, from CMD I ran >java -version and got the same for both

openjdk version "1.8.0_212"

OpenJDK Runtime Environment (AdoptOpenJDK)(build 1.8.0_212-b03)

OpenJDK 64-Bit Server VM (AdoptOpenJDK)(build 25.212-b03, mixed mode)
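
Comparing this output across many machines by eye gets tedious, so here is a small Python sketch that pulls the version string out of the `java -version` banner and checks it against the "Java 1.7 (or later)" requirement mentioned earlier in the thread. The function names are made up for illustration; the only outside facts relied on are that `java -version` writes its banner to stderr and that versions before Java 9 are written as `1.x`.

```python
import re
import subprocess
from typing import Optional

MIN_JAVA_MAJOR = 7  # "Java 1.7 (or later)", as stated earlier in the thread


def parse_java_version(output: str) -> Optional[str]:
    """Extract the quoted version string from `java -version` output."""
    match = re.search(r'version "([^"]+)"', output)
    return match.group(1) if match else None


def java_major(version: str) -> int:
    """Map '1.8.0_212' to 8 and '11.0.2' to 11."""
    parts = version.split(".")
    # Legacy scheme is 1.7, 1.8, ...; from Java 9 on the major number comes first.
    token = parts[1] if parts[0] == "1" and len(parts) > 1 else parts[0]
    digits = re.match(r"\d+", token)
    if digits is None:
        raise ValueError(f"unrecognised Java version: {version!r}")
    return int(digits.group())


def meets_minimum(version: str) -> bool:
    return java_major(version) >= MIN_JAVA_MAJOR


def installed_java_version() -> Optional[str]:
    """Run `java -version`; raises FileNotFoundError if java is not on PATH."""
    result = subprocess.run(["java", "-version"], capture_output=True, text=True)
    # The banner is written to stderr, not stdout.
    return parse_java_version(result.stderr)
```

Calling `installed_java_version()` on each machine and collecting the results gives a quick way to rule Java in or out as the difference.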

Neither has a spellcheck.jar (or any variation) under plugins

N.B. - 2007, the year of the birth of this thread … Steve Jobs introduces the iPhone

Spark expands plugins to a directory in the user’s home folder. I’m using Ubuntu myself, and have a little trouble figuring out what the correct folder under Windows is, but I think it is C:\users\USERNAME\appdata\roaming\Spark\ (or something very similar to that). This directory should hold a ‘plugins’ directory, in which all kinds of subdirectories are present, one for each plugin.

Maybe there’s a difference between those directories, on your users’ computers?

If the spellcheck jar file was present at some point, but got removed from Spark in a later update, then some users might still have the old expanded folder in their user directories, while other users (who didn’t get an update, or who didn’t use this particular computer before) do not.
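
To look for such a difference, the per-user plugins directories of a working and a non-working profile can be compared. The Python sketch below lists what is in each directory (recording file sizes, so a stale or different .jar stands out) and reports the names that differ. The directory location is the guess stated above and may not be exact; `plugin_inventory` and `differing_entries` are hypothetical helper names.

```python
from pathlib import Path
from typing import Dict, Optional, Set

_MISSING = object()  # sentinel: entry is not present at all


def plugin_inventory(plugins_dir: Path) -> Dict[str, Optional[int]]:
    """Map each entry name to its size in bytes (files) or None (folders)."""
    inventory: Dict[str, Optional[int]] = {}
    if not plugins_dir.is_dir():
        return inventory
    for entry in plugins_dir.iterdir():
        inventory[entry.name] = entry.stat().st_size if entry.is_file() else None
    return inventory


def differing_entries(a: Dict[str, Optional[int]],
                      b: Dict[str, Optional[int]]) -> Set[str]:
    """Names that exist in only one inventory, or whose file size differs."""
    return {
        name
        for name in a.keys() | b.keys()
        if a.get(name, _MISSING) != b.get(name, _MISSING)
    }


if __name__ == "__main__":
    # Assumption: location guessed above; USERNAME placeholders must be replaced.
    working = Path(r"C:\Users\USERNAME\AppData\Roaming\Spark\plugins")
    broken = Path(r"C:\Users\OTHERUSER\AppData\Roaming\Spark\plugins")
    print(sorted(differing_entries(plugin_inventory(working), plugin_inventory(broken))))
```

An empty result means the two directories hold the same entries with the same file sizes; it does not compare the contents of the expanded folders.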

ding ding and DING we have a winner. For the users that don’t have spell check I just dropped the .jar file into `C:\Users\USERNAME\AppData\Roaming\Spark\plugins` and it worked. There was one user who had a different .jar file for this (based on file size); I just removed the .jar file and deleted ‘C:\Users\USERNAME\AppData\Roaming\Spark\plugins’ folder before dropping in the correct (newer?) .jar file and that fixed that one.

Thank you for the help … many many “whose line is it anyways” points for you … and some “@midnight” points as well.

post wouldn’t let me edit it, but to be clear “I just removed the .jar file and deleted ‘C:\Users\USERNAME\AppData\Roaming\Spark\plugins’ folder before dropping” should read “I just removed the .jar file from ‘C:\Users\USERNAME\AppData\Roaming\Spark\plugins’ folder before dropping”